Basically the title. I’m in the process of setting up proper backups for my configured containers on Unraid, and I’m wondering how often I should run my backup script. Right now I have a cron job set to run on Monday and Friday nights. Is this too frequent? What’s your schedule, and do you strictly back up your appdata (container configs), or is there other data you include in your backups?

I’m always backing up with SyncThing in realtime, but every week I do an off-site type of tarball backup that isn’t within the SyncThing setup.
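For anyone wanting to script that kind of periodic tarball, here is a minimal Python sketch. The function name `make_tarball` and the paths in the usage note are assumptions for illustration, not anything from the posts above, and it does not stop containers first, which you may want for databases.

```python
import tarfile
from datetime import datetime
from pathlib import Path


def make_tarball(source_dir, dest_dir, now=None):
    """Write a timestamped .tar.gz of source_dir into dest_dir and return its path."""
    source = Path(source_dir)
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)

    # Timestamp in the file name so each run produces a new archive.
    stamp = (now or datetime.now()).strftime("%Y-%m-%d_%H%M%S")
    archive = dest / f"{source.name}_{stamp}.tar.gz"

    with tarfile.open(archive, "w:gz") as tar:
        # arcname keeps paths inside the archive relative to the folder name.
        tar.add(source, arcname=source.name)
    return archive
```

Usage would look something like `make_tarball("/mnt/user/appdata", "/mnt/user/backups")`, with both paths swapped for whatever your own setup uses.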

I rsync from ZFS to an off-site Unraid every 24 hours, 5 times a week. On the sixth day it does a checksum-based rsync, which obviously means more stress, so I only do it once a week. The seventh day is reserved for ZFS scrubbing every two weeks.
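A rough sketch of how that weekly rotation could be picked in a wrapper script. The paths, the host, and the choice of which weekdays get which job are all assumptions; only `--archive` and `--checksum` are real rsync options here, and the function just builds the command rather than running it.

```python
import datetime
from typing import List, Optional

# Hypothetical source and destination; replace with your own.
SOURCE = "/mnt/tank/data/"
DEST = "backup@offsite.example:/mnt/user/backups/data/"


def rsync_command(day: datetime.date) -> Optional[List[str]]:
    """Return the rsync argv for the given day, or None on the scrub day."""
    weekday = day.weekday()  # Monday == 0 ... Sunday == 6
    if weekday <= 4:
        # Monday to Friday: normal run, compares size and modification time.
        return ["rsync", "--archive", SOURCE, DEST]
    if weekday == 5:
        # Saturday: compare file contents by checksum (slower, more disk I/O).
        return ["rsync", "--archive", "--checksum", SOURCE, DEST]
    # Sunday: no rsync, left free for the scrub.
    return None
```

The returned list can be handed to `subprocess.run(cmd, check=True)` when it is not `None`.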

Backups???

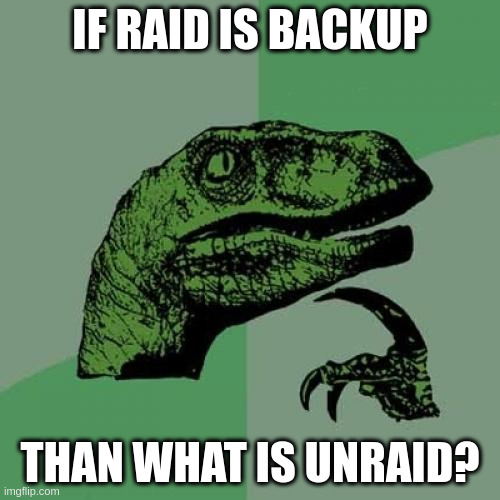

Raid is a backup.

That is what the B in RAID stands for.

Just like the “s” in IoT stands for “security”

If Raid is backup, then Unraid is?

I run Borg nightly, backing up the majority of the data on my boot disk, incl docker volumes and config + a few extra folders.

Each individual archive is around 550 GB, but because of the de-duplication and compression it’s only ~800 MB of new data each day, taking around 3 min to complete the backup.

Borg’s de-duplication is honestly incredible. I keep 7 daily backups, 3 weekly, 11 monthly, then one for each year beyond that. The 21 historical backups I have right now would be 10.98 TB of raw data. After de-duplication and compression it only takes up 407.98 GB on disk.

With that kind of space savings, I see no reason not to keep such frequent backups. Hell, the whole archive takes up less space than one copy of the original data.
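A quick check of those numbers, as a sketch that assumes decimal units (1 TB = 1000 GB); the helper name `dedup_stats` is made up for illustration.

```python
def dedup_stats(raw_gb: float, stored_gb: float):
    """Return (reduction ratio, fraction of space saved)."""
    ratio = raw_gb / stored_gb
    savings = 1 - stored_gb / raw_gb
    return ratio, savings


raw_gb = 10.98 * 1000   # 21 archives, raw size (assumes decimal TB)
stored_gb = 407.98      # actual size on disk
single_archive_gb = 550  # approximate size of one archive

ratio, savings = dedup_stats(raw_gb, stored_gb)
print(f"{ratio:.1f}x smaller, {savings:.1%} saved")  # 26.9x smaller, 96.3% saved
print(stored_gb < single_archive_gb)                 # True
```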

+1 for borg

| | Original size | Compressed size | Deduplicated size |
|---|---|---|---|
| This archive | 602.47 GB | 569.64 GB | 15.68 MB |
| All archives | 16.33 TB | 15.45 TB | 607.71 GB |

| | Unique chunks | Total chunks |
|---|---|---|
| Chunk index | 2703719 | 18695670 |

Once every 24 hours.

Proxmox servers are mirrored zpools, not that RAID is a backup. Replication between Proxmox servers every 15 minutes for HA guests, hourly for less critical guests. Full backups with PBS at 5AM and 7PM, 2 sets apiece with one set that goes off site and is rotated weekly. Differential replication every day to zfs.rent. I keep 30 dailies, 12 weeklies, 24 monthlies and infinite annuals.

Periodic test restores of all backups at various granularities at least monthly or whenever I’m bored or fuck something up.

Yes, former sysadmin.

This is very similar to how I run mine, except that I use Ceph instead of ZFS. Nightly backups of the CephFS data with Duplicati, followed by staggered nightly backups for all VMs and containers to a PBS VM on the NAS. File backups from Unraid get sent up to CrashPlan.

Slightly fewer retention points to cut down on overall storage, and a similar test pattern.

Yes, current sysadmin.

I would like to play with ceph but I don’t have a lot of spare equipment anymore, and I understand ZFS pretty well, and trust it. Maybe the next cluster upgrade if I ever do another one.

And I have an almost unhealthy paranoia after seeing so many shitshows in my career, so having a pile of copies just helps me sleep at night. The day I have to delve into the last layer is the day I build another layer, but that hasn’t happened recently. PBS dedup is pretty damn good so it’s not much extra to keep a lot of copies.

I use Duplicati for my backups, and have backup retention set up like this:

Save one backup each day for the past week, then save one each week for the past month, then save one each month for the past year.

That way I have granular backups for anything recent, and the further back in the past you go, the less frequent the backups are, to save space.
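The thinning rule described above can be sketched roughly like this. To be clear, this is not Duplicati’s implementation, just an illustration of the idea; the function name and the 7/30/365-day cutoffs are assumptions, and it expects one `date` per backup.

```python
from datetime import date, timedelta


def backups_to_keep(backup_days, today):
    """Return the set of backup dates kept by a day/week/month thinning rule."""
    keep = set()
    seen_weeks = set()
    seen_months = set()
    for day in sorted(set(backup_days), reverse=True):  # newest first
        age = (today - day).days
        if age < 0:
            continue  # ignore dates in the future
        if age < 7:
            keep.add(day)  # every backup from the past week
        elif age < 30:
            week = tuple(day.isocalendar())[:2]  # (ISO year, ISO week)
            if week not in seen_weeks:  # newest backup of each week
                seen_weeks.add(week)
                keep.add(day)
        elif age < 365:
            month = (day.year, day.month)
            if month not in seen_months:  # newest backup of each month
                seen_months.add(month)
                keep.add(day)
        # anything a year old or more is dropped
    return keep
```

With a year or more of daily backups this keeps only a couple of dozen restore points instead of hundreds.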

I do not as I cannot afford the extra storage required to do so.

I back up all of my Proxmox LXCs/VMs to a Proxmox Backup Server every night and sync these backups to another PBS in another town. A second Proxmox backup runs every noon to my NAS. (I know, the 3-2-1 rule is not reached…)

Assuming it is on: Daily

I have

- Unraid backs up its USB

- Unraid appdata gets backed up weekly by a Community Applications plugin (CA app backup), and I use rclone to back it up to an old Box account (100GB for life…). I did have it encrypted, but it seems I need to fix that…

- Parity drive on my Unraid (8TB)

- I am trying to understand how to use Rclone to back up my photos to Proton Drive so that’s next.

Music and media are not too important yet, but I would love some insight.

> Right now, I have a cron job set to run on Monday and Friday nights, is this too frequent?

Only you can answer this. How many days of data are you prepared to lose? What are the downsides of running your backup scripts more frequently?
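One way to put a number on the first question is to work out the longest gap between runs for a given weekly schedule. A small sketch, with a made-up helper name and Monday counted as day 0:

```python
def worst_case_gap_days(backup_weekdays):
    """Longest stretch in days between consecutive weekly backups (Monday == 0)."""
    days = sorted(set(backup_weekdays))
    if not days:
        raise ValueError("need at least one backup day")
    gaps = []
    for i, d in enumerate(days):
        nxt = days[(i + 1) % len(days)]  # wraps around to next week
        gaps.append((nxt - d) % 7 or 7)  # a single weekly backup means a 7-day gap
    return max(gaps)


print(worst_case_gap_days([0, 4]))  # Monday + Friday -> 4
```

So with Monday and Friday nights, a failure just before Friday’s run would cost up to four days of changes.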

Daily backups here. Storage is cheap. Losing data is not.

I continuously back up important files/configurations to my NAS. That’s about it.

IMO people who keep redundant copies or backups of their media are insane… It’s such an incredible waste of space. Having a robust media library is nice, but there’s no reason you can’t just start over if you have data corruption or something. I have TB and TB of media that I can redownload in a weekend if something happens (if I even want). No reason to waste backup space, IMO.

Maybe for common stuff, but some don’t want 720p YTS or YIFY releases.

There are also some releases that don’t follow TVDB aired releases (which sonarr requires) and matching 500 episodes manually with deviating names isn’t exactly what I call ‘fun time’.

And there are also rare releases that just aren’t seeded anymore in that specific quality or present on Usenet. So yes: backing up some media files may be important.

Data hoarding random bullshit will never make sense to me. You’re literally paying to keep media you didn’t pay for because you need the 4k version of Guardians of the Galaxy 3 even though it was a shit movie…

Grab the YIFY, if it’s good, then get the 2160p version… No reason to datahoard like that. It’s frankly just stupid considering you’re paying to store this media.

This may work for you and please continue doing that.

But I’ll get the 1080p version with a moderate bitrate of whatever I can acquire, because I want it in the first place, rather than grabbing whatever I can to fill up my disk.

And as I mentioned: Matching 500 episodes (e.g. Looney Tunes and Disney shorts) manually isn’t fun.

Much less if you also want to get the exact release (for example music) of a certain media and need to play detective on MusicBrainz.

> Matching 500 episodes (e.g. Looney Tunes and Disney shorts) manually isn’t fun.

With tools like TinyMediaManager, why in the absolute fuck would you do it manually?

At this point, it sounds like you’re just bad at media management more than anything. 1080p h265 video is at most between 1.5-2GB per video. That means with even a modest network connection speed (500Mbps, let’s say) you can realistically download 5TB of data over 24 hours… You can redownload your entire media library in less than 4-5 days if you wanted to.
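For reference, the back-of-the-envelope math behind that estimate, as a sketch. It assumes the link is saturated the whole time and uses decimal units; the 20 TB library size is an assumption chosen for illustration, and the function names are made up.

```python
def terabytes_per_day(link_mbps: float, efficiency: float = 1.0) -> float:
    """Data transferable in 24 hours at the given link speed, in decimal TB."""
    bytes_per_second = link_mbps * 1_000_000 / 8 * efficiency
    return bytes_per_second * 86_400 / 1e12


def days_to_redownload(library_tb: float, link_mbps: float, efficiency: float = 1.0) -> float:
    """Days needed to pull library_tb over the link, ignoring availability of sources."""
    return library_tb / terabytes_per_day(link_mbps, efficiency)


print(f"{terabytes_per_day(500):.1f} TB/day")          # 5.4 TB/day
print(f"{days_to_redownload(20, 500):.1f} days")       # 3.7 days
```

Real-world throughput is usually below line rate, which is what the `efficiency` parameter is for.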

So why spend ~$700 on 2 20TB drives, one to be used only as redundancy, when you can simply redownload everything you previously had (if you wanted to) for free? It’ll just take a little bit of time.

Complete waste of money.

I prefer Sonarr for management.

Problem is the auto matching.

It just doesn’t always work.

Practical example: `Looney.Tunes.and.Merrie.Melodies.HQ.Project.v2022`. Some episodes are either not in the correct order or their names deviate from how TVDB sorts them.

Your best regex/auto-matching can do nothing about it if `Looney.Tunes.Shorts.S11.E59.The.Hare.In.Trouble.mkv` should actually be named `Looney.Tunes.Shorts.S1959.E11.The.Hare.In.A.Pickle.mkv` to be automatically imported. At some point fixing multiple hits becomes so tedious it’s easier to just clear all auto-matches and restart fresh.

It becomes a whole different thing when you yourself are a creator of any kind. Sure you can retorrent TBs of movies. But you can’t retake that video from 3 years ago. I have about 2 TB of photos I took. I classify that as media.

> It becomes a whole different thing when you yourself are a creator of any kind.

Clearly this isn’t the type of media I was referencing…