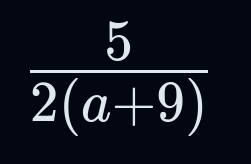

I picked an example without confusion on purpose, because most people will generally avoid patterns similar to what OP posted. But if you want something more ambiguous:

This is clearly 5/(2 * (a+9)). If we write this in the form that the OP uses, 5/2(a+9), it’s fucked beyond all recognition.
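To put numbers on how far apart the two readings of 5/2(a+9) land, here is a quick Python sketch. The function names and the value `a = 1` are made up for illustration, and Python has no implicit multiplication, so each reading has to be spelled out with an explicit `*`:

```python
def stacked_fraction(a):
    # Reading 1: everything after the slash is the denominator,
    # i.e. 5 / (2 * (a + 9)), as in the stacked-fraction form.
    return 5 / (2 * (a + 9))


def left_to_right(a):
    # Reading 2: division and multiplication share precedence and are
    # evaluated left to right, i.e. (5 / 2) * (a + 9).
    return 5 / 2 * (a + 9)


a = 1  # arbitrary value, chosen only for illustration
print(stacked_fraction(a))  # 0.25
print(left_to_right(a))     # 25.0
```

Same characters on the page, and the results differ by a factor of 100 for this `a`. Which reading a given calculator or language picks for the inline form depends on its own rules, so the parentheses are the only safe option.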

Me, a procrastinator: eh, it can wait